Performance

Performance is rarely determined by a single factor. Instead, it emerges from how application code, data storage, content delivery, and infrastructure interact with each other.

Performance varies across systems and environments, so the usual industry practice is to instrument the system, observe how it behaves, and only then draw conclusions. Improving performance is rarely a one-time effort; it is an iterative process, and its cadence often follows how frequently the application is released to production.

Performance is rarely something that can be “added later.” It is shaped by decisions made throughout the system, sometimes long before the first bottleneck appears. What looks efficient in a small environment may behave very differently at scale. Recognizing this early helps engineers design systems that are resilient to growth and change.

Understanding Performance Through Measurement

In everyday life we often measure efficiency using simple metrics. For example, the efficiency of a car is commonly measured in miles per gallon, which tells us how much distance a vehicle can travel using a given amount of fuel.

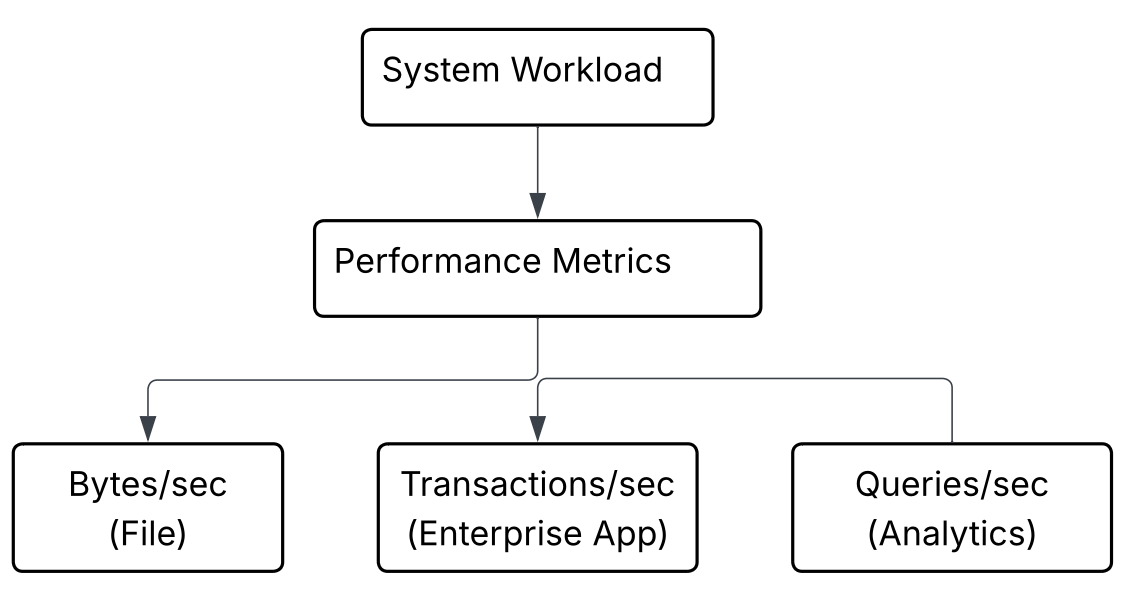

Computing systems use similar measurable indicators. The exact metric depends on the type of system being evaluated.

For example:

- File transfer systems often measure performance in bytes per second, indicating how quickly data moves between systems.

- Enterprise applications frequently measure performance in transactions per second (TPS), representing how many business operations can be processed in a given time.

- Analytical systems often evaluate performance using queries per second, which indicates how many analytical requests the system can handle.

These measurements provide a practical way to understand how efficiently a system operates.
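As a rough illustration of how such a figure can be produced, the sketch below times a batch of operations and divides the count by the elapsed time. The `measure_throughput` helper and the `process_order` workload are hypothetical names invented for this example, and a real measurement would run against the actual system under realistic load.

```python
import time


def measure_throughput(operation, count):
    """Run `operation` `count` times and return operations per second."""
    start = time.perf_counter()
    for i in range(count):
        operation(i)
    elapsed = time.perf_counter() - start
    return count / elapsed


def process_order(order_id):
    # Hypothetical stand-in for one business transaction.
    return sum(range(100)) + order_id


if __name__ == "__main__":
    tps = measure_throughput(process_order, 10_000)
    print(f"{tps:,.0f} transactions per second")
```

The same shape applies to bytes per second or queries per second: count the units of work, measure the elapsed time, and divide.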

Layers of Performance

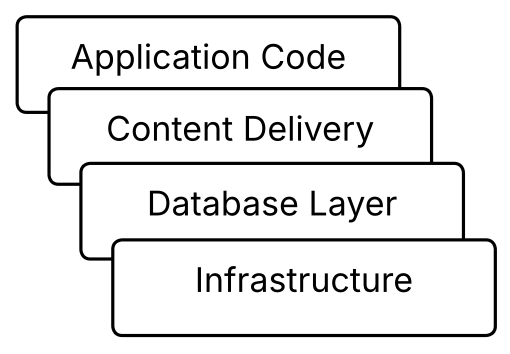

When analyzing performance, it is useful to look at several layers of a system. Historically, performance discussions have often revolved around three primary areas:

- Application code

- Content delivery

- Database storage

In modern systems, infrastructure also plays a significant role. Networks, servers, storage systems, and caching layers all contribute to the overall performance experienced by users.

Code-Level Performance

When writing application code, developers should be able to recall the fundamental techniques of algorithmic efficiency. Even when the exact implementation does not come to mind, recalling the method or pattern is often enough to judge whether the current approach is efficient. Once the developer has identified the appropriate technique, modern tools, including AI systems, can help validate or refine the implementation.

Concepts such as data structures and Big-O complexity help developers reason about how code behaves as input sizes grow.

However, improving performance is not limited to selecting an algorithm; the data itself must also be structured efficiently. In many situations this means indexing data in advance, so that the system can retrieve information quickly instead of repeatedly scanning large datasets.
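The difference between scanning and indexing can be sketched in a few lines. The order records and function names below are hypothetical; the point is only that the scan inspects every record on every lookup, while the index pays that cost once and then answers each lookup with a dictionary access.

```python
from collections import defaultdict

# Hypothetical order records; in practice these would come from storage.
orders = [
    {"id": 1, "customer": "alice", "total": 12.50},
    {"id": 2, "customer": "bob", "total": 7.25},
    {"id": 3, "customer": "alice", "total": 30.00},
]


def find_by_scan(records, customer):
    """O(n) per lookup: every record is inspected on every call."""
    return [r for r in records if r["customer"] == customer]


def build_index(records):
    """O(n) once: group records by customer ahead of time."""
    index = defaultdict(list)
    for r in records:
        index[r["customer"]].append(r)
    return index


def find_by_index(index, customer):
    """Average O(1) dictionary lookup instead of a full scan."""
    return index.get(customer, [])


index = build_index(orders)
# Both approaches return the same records; only the cost per lookup differs.
assert find_by_scan(orders, "alice") == find_by_index(index, "alice")
```

The trade-off is that the index consumes extra memory and must be kept up to date when records change.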

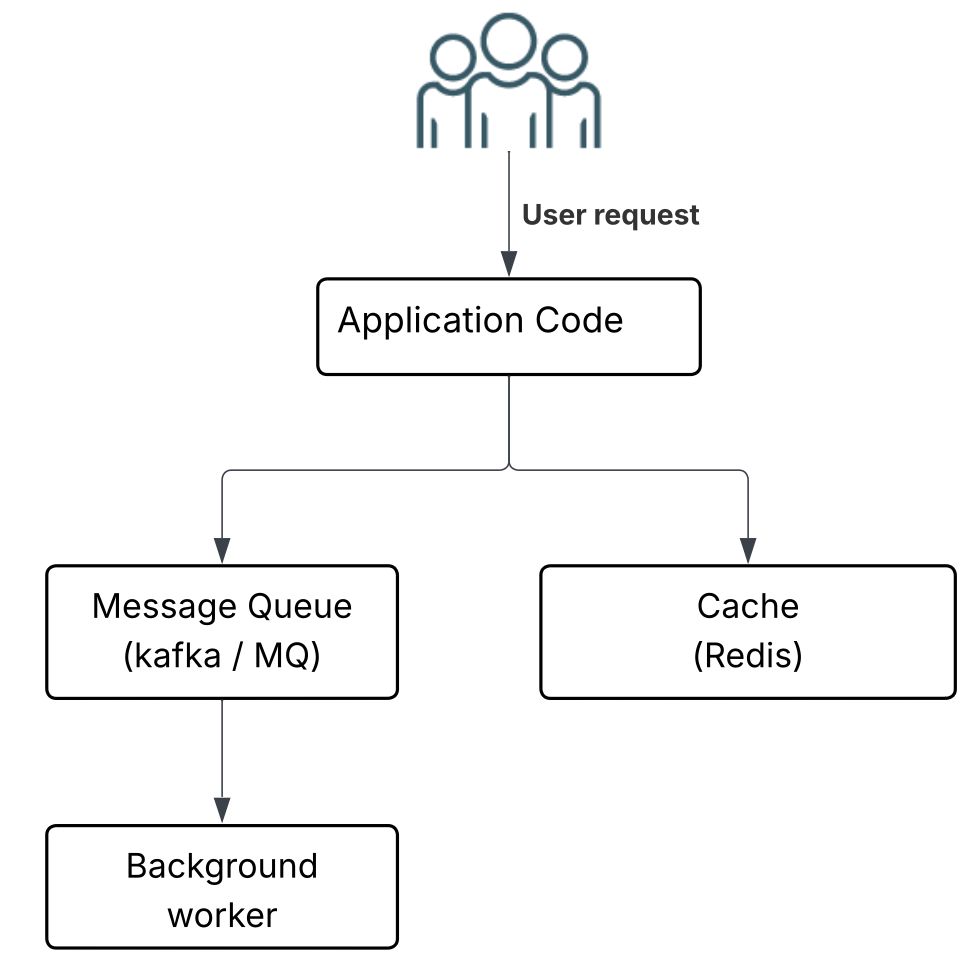

Beyond algorithm design, modern systems often rely on architectural techniques to manage workload effectively. For example:

- Delegating heavy or long-running tasks to asynchronous processing systems such as message queues or event streams (for example, Kafka).

- Using concurrency techniques to process multiple tasks simultaneously rather than sequentially.

- Offloading expensive I/O operations to background workers while keeping the main application responsive.
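The concurrency and offloading ideas above can be illustrated with a small thread pool. This is a minimal sketch under stated assumptions: `fetch_report` is a hypothetical I/O-bound call simulated with a sleep, and the pool size of 8 is arbitrary. It does not show a message broker such as Kafka, only in-process workers.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fetch_report(report_id):
    # Hypothetical slow I/O call (network or disk), simulated with a sleep.
    time.sleep(0.05)
    return f"report-{report_id}"


def fetch_sequentially(ids):
    # Each call waits for the previous one to finish.
    return [fetch_report(i) for i in ids]


def fetch_concurrently(ids, workers=8):
    # Worker threads overlap the waiting time of I/O-bound calls;
    # map() returns results in the same order as the inputs.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(fetch_report, ids))


if __name__ == "__main__":
    ids = range(8)
    start = time.perf_counter()
    fetch_sequentially(ids)
    print(f"sequential: {time.perf_counter() - start:.2f}s")
    start = time.perf_counter()
    fetch_concurrently(ids)
    print(f"concurrent: {time.perf_counter() - start:.2f}s")
```

Threads help here because the work is dominated by waiting; CPU-bound work would usually call for separate processes or a different design.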

Caching Strategies

Caching plays an important role in improving performance across multiple layers of a system.

Developers may use several caching approaches, including:

- Browser caching or local storage

- Application-level caching

- Distributed caching systems such as Redis

Caching systems often include expiration and purge mechanisms to ensure that stale data does not persist indefinitely. However, flushing an entire cache is often inefficient. A more effective approach is designing caching strategies that allow targeted invalidation, where only the specific content or cache slot that has changed is purged rather than clearing the entire cache.

This approach prevents unnecessary recomputation and reduces the load on the underlying system.
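A minimal in-memory sketch of expiration plus targeted invalidation might look like the following. The `TtlCache` class and the `load_product` loader are hypothetical names for illustration; a distributed cache such as Redis would expose the same ideas through its own API.

```python
import time


class TtlCache:
    """Tiny in-memory cache with per-entry expiry and targeted invalidation."""

    def __init__(self, ttl_seconds):
        self.ttl = ttl_seconds
        self._entries = {}  # key -> (expires_at, value)

    def get(self, key, loader):
        entry = self._entries.get(key)
        now = time.monotonic()
        if entry is not None and entry[0] > now:
            return entry[1]  # fresh hit
        value = loader(key)  # miss or expired: recompute
        self._entries[key] = (now + self.ttl, value)
        return value

    def invalidate(self, key):
        """Purge only the entry that changed."""
        self._entries.pop(key, None)

    def clear(self):
        """Flush everything; every later read becomes a miss."""
        self._entries.clear()


if __name__ == "__main__":
    def load_product(product_id):
        # Hypothetical expensive lookup.
        print("loading", product_id)
        return {"id": product_id}

    cache = TtlCache(ttl_seconds=60)
    cache.get("a", load_product)
    cache.get("b", load_product)
    cache.invalidate("a")          # only "a" changed
    cache.get("a", load_product)   # reloaded
    cache.get("b", load_product)   # still served from the cache
```

After `invalidate("a")`, only the changed entry is recomputed; calling `clear()` instead would force both entries to be reloaded.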

Infrastructure Evolution

Performance improvements do not occur only at the application level. Infrastructure has also evolved significantly over time.

For example, telecommunications networks have progressed from 2G to 3G, 4G, 5G, and beyond, with each generation improving data transmission speed and reliability. A similar evolution has occurred within server infrastructure and cloud platforms.

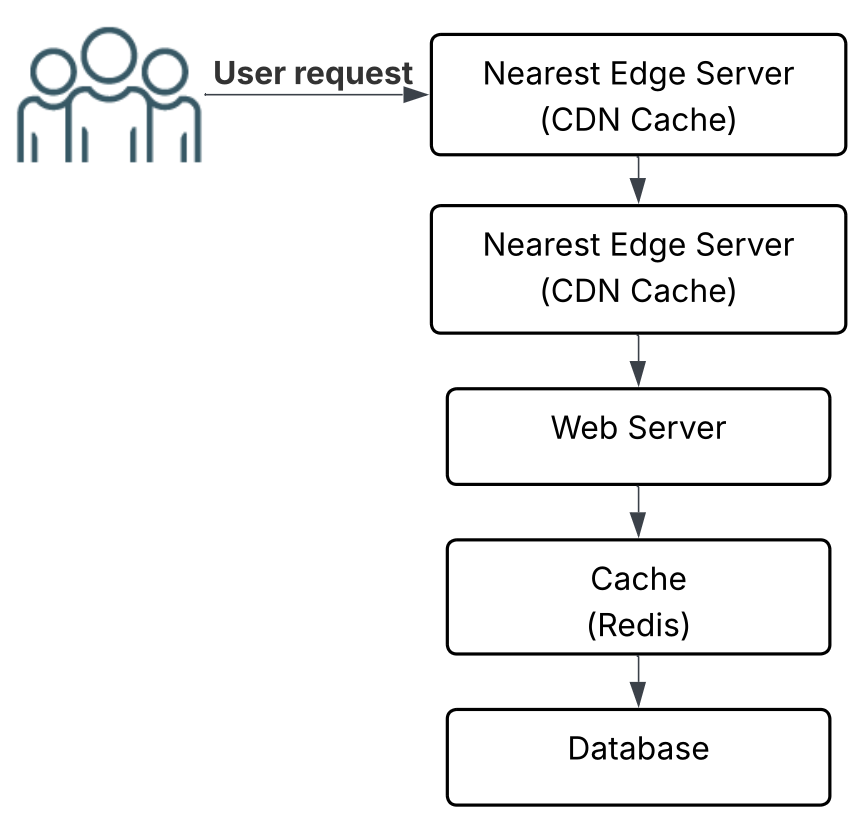

Modern architectures increasingly rely on distributed caching and edge delivery systems, where content is stored closer to the user and served from the nearest available server. This reduces latency and allows applications to scale more efficiently.

Memory Awareness

To better assess application performance, developers often need to understand how memory is used by the system. While memory analysis may appear complex, it fundamentally involves measuring how much memory different parts of the application consume.

In Java environments, one useful starting point is JOL (Java Object Layout), which helps estimate object size and memory layout.

However, object layout tools do not account for all memory used by the JVM. Additional components contribute to overall memory usage, including:

- Metaspace (class metadata)

- Code cache used by the JIT compiler

- Thread stacks

- Direct or off-heap buffers

- Other internal JVM structures

To understand the real memory baseline of an application, developers often analyze metrics such as the heap live set, heap configuration, and memory allocation patterns.

Content and Page Composition

Content also affects performance, particularly images and static assets.

For example, if a homepage contains multiple images, the browser must issue several network requests to retrieve them. There may also be background requests for navigation elements, JavaScript files, CSS stylesheets, and other resources required to render the page.

Although the user may interact with a single page, the browser often performs many operations behind the scenes. Proper caching strategies and optimized content delivery can significantly reduce these repeated requests.

Database Design

Database structure is another key factor in system performance. Important considerations include:

- Table structure

- Indexes

- Constraints

- Relationships between tables

- Data distribution

- The type of database engine used

Well-designed database structures allow queries to execute efficiently, while poorly designed schemas can quickly become a performance bottleneck.
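The effect of an index can be observed directly by asking the database for its query plan. The sketch below uses SQLite from the Python standard library purely as a convenient stand-in; the `orders` table, its columns, and the index name are assumptions made for this example, and the exact plan wording differs between SQLite versions and between database engines.

```python
import sqlite3


def query_plan(conn, sql, params=()):
    """Return SQLite's textual plan for a query (last column of each row)."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return " | ".join(row[-1] for row in rows)


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 100, i * 1.5) for i in range(1000)],
)

lookup = "SELECT id, total FROM orders WHERE customer_id = ?"

# Without an index, the only option is to scan the whole table.
plan_before = query_plan(conn, lookup, (42,))

conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")

# With the index in place, the planner can search it instead.
plan_after = query_plan(conn, lookup, (42,))

print("before:", plan_before)
print("after: ", plan_after)
```

Most relational engines offer an equivalent of `EXPLAIN`, and checking the plan before and after a schema change is a quick way to confirm that an index is actually being used.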

Infrastructure

Another area that is sometimes overlooked is server configuration and infrastructure design.

Performance is influenced not only by application code but also by factors such as:

- Server hardware

- Memory capacity

- Storage performance

- Network bandwidth

- System configuration

In practice, performance improvements often emerge from collaboration between application developers and infrastructure engineers.

Database Choice and Business Workload

Another important factor is the type of database used by the application. Different systems handle different types of workloads, and selecting the appropriate database technology should align with the nature of the business.

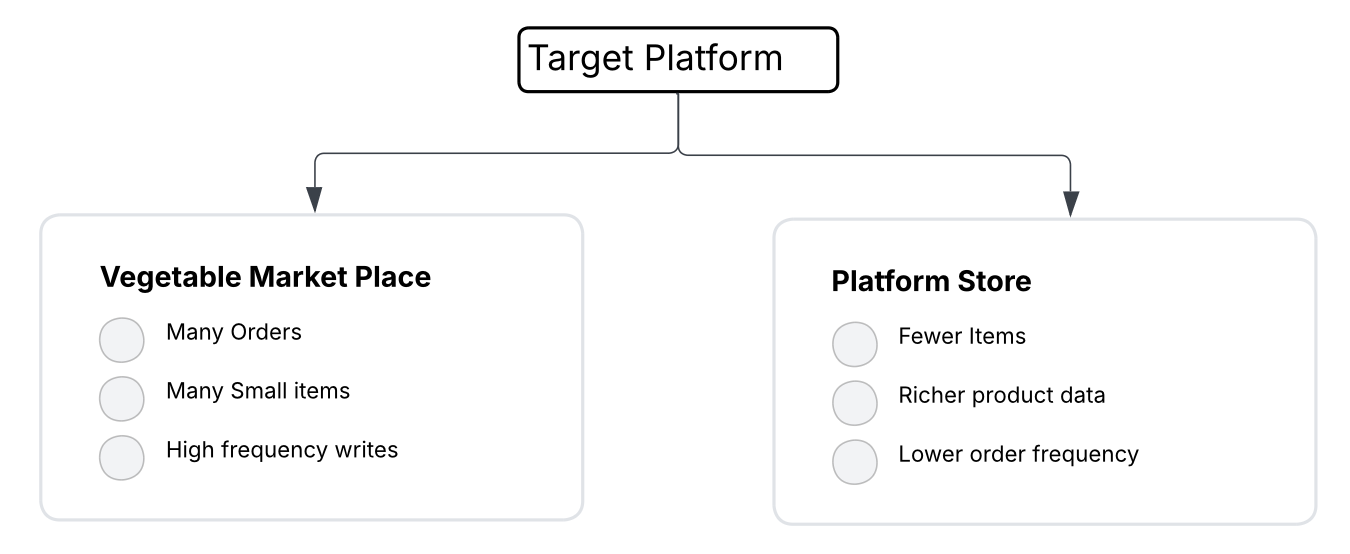

For example, consider two simple scenarios:

- A grocery or vegetable marketplace where orders contain many low-priced items and transactions occur frequently.

- A specialty retail store selling products such as perfume, where orders may contain fewer items but the product catalog may contain richer descriptions and attributes.

Both applications are transactional systems, but their data access patterns and storage needs can differ. Systems that process large volumes of transactions often prioritize fast inserts, efficient indexing, and quick lookups, while other systems may focus more on managing detailed product data or relationships.

Because of this, database selection and schema design should always reflect the business workload and operational patterns of the application.

Web Server Choice

Another factor that can influence performance is the web server used to run the application. Different servers have different design philosophies and performance characteristics.

Some servers are optimized for event-driven, non-blocking architectures, while others rely on thread-per-request models. The choice of server can influence how efficiently the system handles concurrent requests.

Examples include:

- Traditional web servers such as Apache HTTP Server

- Event-driven servers such as Nginx

- Embeddable servers such as Tomcat (a servlet container), or servers built on event-driven networking frameworks such as Netty

Selecting the appropriate server and configuring it correctly can significantly improve how an application handles incoming traffic.
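To make the event-driven model more concrete, the toy sketch below handles many simulated requests on a single thread by interleaving them while they wait on I/O. It is not how Nginx or Netty are implemented; `handle_request` and `serve` are hypothetical names, and the sleep stands in for a non-blocking database or upstream call.

```python
import asyncio
import threading


async def handle_request(request_id, seen_threads):
    # Record which OS thread runs this handler.
    seen_threads.add(threading.get_ident())
    # Stand-in for non-blocking I/O; the event loop runs other handlers meanwhile.
    await asyncio.sleep(0.01)
    return f"response-{request_id}"


async def serve(n_requests):
    seen_threads = set()
    # All handlers are interleaved by one event loop while they wait on I/O.
    responses = await asyncio.gather(
        *(handle_request(i, seen_threads) for i in range(n_requests))
    )
    return responses, seen_threads


if __name__ == "__main__":
    responses, threads = asyncio.run(serve(100))
    print(len(responses), "responses handled on", len(threads), "thread(s)")
```

A thread-per-request server would instead dedicate one thread to each of these requests for its full duration, which is simpler to reason about but costs more memory and context switching under high concurrency.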

Transactional vs Analytical Workloads

In database systems, workloads are often described using two broad categories: OLTP and OLAP.

OLTP (Online Transaction Processing) systems focus on handling a large number of small transactions. These systems typically require fast inserts, updates, and lookups, and they are commonly used in applications such as e-commerce platforms, payment systems, and order management systems.

OLAP (Online Analytical Processing) systems focus on analyzing large volumes of data. They are typically used for reporting, business intelligence, and data analysis, where queries may scan large datasets to generate insights.

Most business applications are primarily OLTP systems, while analytical workloads are often handled separately through reporting pipelines or data warehouses.

Understanding whether a system is primarily transactional or analytical helps guide decisions around database design, indexing strategies, and performance optimization.
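The two access patterns can be contrasted on a single small table. This sketch again uses SQLite as a stand-in, with a hypothetical `sales` table; in practice the analytical query would usually run against a separate reporting store rather than the transactional database.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE sales (id INTEGER PRIMARY KEY, region TEXT, amount REAL)"
)


def record_sale(region, amount):
    """OLTP-style: one small write, committed immediately."""
    with conn:  # commits on success, rolls back on error
        cur = conn.execute(
            "INSERT INTO sales (region, amount) VALUES (?, ?)", (region, amount)
        )
    return cur.lastrowid


def get_sale(sale_id):
    """OLTP-style: point lookup by primary key."""
    return conn.execute(
        "SELECT region, amount FROM sales WHERE id = ?", (sale_id,)
    ).fetchone()


def revenue_by_region():
    """OLAP-style: aggregate that reads every row in the table."""
    return conn.execute(
        "SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region"
    ).fetchall()
```

The first two functions touch a single row and benefit from keys and narrow indexes, while the third reads the whole table, which is why large analytical workloads are often moved to storage designed for scans and aggregation.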

Feeling exhausted already? That’s perfectly normal. Performance can feel complex because it spans architecture, infrastructure, and real-world usage patterns. But that is exactly why it deserves attention from the very beginning. When performance becomes part of the earliest discussions around a requirement, it becomes easier to anticipate problems and build systems that remain dependable as they evolve.
